Last updated on June 4, 2026

Agile resolves human communication failures. But what happens when the humans are taken out of the loop?

I shipped my first product in 1998. Waterfall. 3 months of requirements documents, a month of design specs, and 4 months of engineering – followed by a go-live that revealed, with painful precision, that the stakeholder had wanted something slightly but critically different. We had built the right system for the wrong mental model. That experience wasn’t unique. It was the norm. And it’s exactly why a group of engineers and thinkers gathered in a Utah ski lodge in February 2001 and wrote the Agile Manifesto.

26 years later, I’m watching AI design agents and AI coding agents enter the picture – and for the first time in my career, I’m genuinely asking: is Agile still needed in the age of AI agents?

What problem was Agile actually solving?

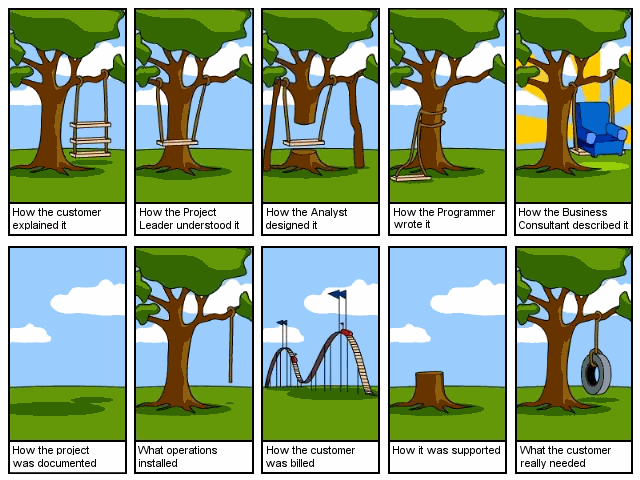

Let’s be precise about this, because the mythology has gotten sloppy. Agile was not primarily about shipping faster. It was about failing faster and cheaper – surfacing the inevitable miscommunication between what a human customer says, what a human analyst interprets, what a human architect designs, and what a human engineer builds, before all four layers of telephone had calcified into production code. The classic tree swing cartoon comes to mind.

“Agile is not a delivery methodology. It is a miscommunication detection system with short feedback loops built in.”

The sprint review exists not because two weeks is the ideal unit of software delivery. It exists because two weeks is short enough that when you show a stakeholder the wrong thing, you haven’t wasted six months finding out.

I joined a consulting engineering team late in the lifecycle of a dot-com startup, govWorks.com. By that point, the team had already spent months building the system strictly to specification.

Just 4 days before launch, the business team uncovered a critical issue: the “ask” feature – powered by Ask Jeeves – wasn’t behaving as intended. A simple query like “parking” returned a response along the lines of, “Do you mean last name Park or first name Park?” Not exactly helpful for users trying to find parking information – and certainly not the kind of surprise you want days before going live (see the 57:25 mark of the documentary Startup.com).

It was a stark reminder that validating real user interactions early and often isn’t optional. Had this capability been tested and iterated on sooner, the team could have avoided a costly, last-minute scramble.

The Dunbar Number sitting inside every sprint

Robin Dunbar’s research on cognitive limits – the famous “150 people” number – points to something deeper: humans have a finite bandwidth for maintaining stable, nuanced relationships of mutual understanding. In software teams, this manifests not as headcount but as conceptual fidelity across handoffs.

By the time “the customer needs a flexible dashboard” reaches the engineer who builds the charting component, it may have passed through: a product manager’s prioritization lens, a UX designer’s affordance choices, a tech lead’s architectural opinions, and a sprint planner’s scope reduction. Each human adds signal and subtracts fidelity. Agile’s sprints don’t eliminate these handoffs. They just schedule collision points – the sprint review, the retrospective, the backlog refinement – where the accumulated drift gets corrected before it compounds.
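The compounding can be made concrete with a toy calculation. This is only a sketch under a loud assumption: that every handoff preserves the same fixed share of the original intent. The function name and the 90% figure are invented for illustration and are not measurements of any real team.

```python
def fidelity_after_handoffs(per_handoff_retention: float, handoffs: int) -> float:
    """Share of the original intent left after a chain of handoffs.

    Toy model: assumes each handoff keeps the same fraction of what it
    received, so the losses multiply rather than add.
    """
    if not 0.0 <= per_handoff_retention <= 1.0:
        raise ValueError("per_handoff_retention must be between 0 and 1")
    if handoffs < 0:
        raise ValueError("handoffs must be non-negative")
    return per_handoff_retention ** handoffs


# The four handoffs named above: product manager, UX designer,
# tech lead, sprint planner. 0.9 is a hypothetical retention rate.
print(round(fidelity_after_handoffs(0.9, 4), 4))  # 0.6561
```

Even with a generous 90% per handoff, roughly a third of the intent is gone after four hops in this model, which is the drift a sprint review is scheduled to catch.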

Enter the agents

Now consider what changes when AI design agents and AI coding agents enter the loop. Products like Cursor, Devin, and Lovable, along with emerging vertical design agents, are beginning to demonstrate something remarkable: the handoff between design intent and implementation can, under the right conditions, achieve a fidelity that human handoffs structurally cannot.

| Human handoffs | Agent handoffs |
| --- | --- |
| Lossy translation at each layer | Structured intent preserves semantics |
| Implicit assumptions fill gaps | Explicit constraints, no implicit fills |
| Context degrades with team size | Context is computable, not social |
| Sprints exist to detect drift | Drift is checkable against a spec |
| Velocity measured in story points | Velocity measured in shipped behavior |

Imagine a design agent that doesn’t just produce pixel layouts but emits a semantic intent graph – capturing not just “button, blue, 48px” but “primary CTA for authenticated checkout, accessible label, error state if cart is empty.” A coding agent consuming that graph isn’t guessing intent from a Figma frame. It’s executing a specification. The gap that Agile sprints exist to catch may simply not open between those two agents.
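To make “executing a specification” less abstract, here is a minimal sketch of what one node of such an intent graph and a drift check against it might look like. Everything in it is hypothetical: `IntentNode`, `BuiltComponent`, `find_drift`, the label text, and the state names are made up for illustration and do not describe any real agent’s format.

```python
from dataclasses import dataclass, field


@dataclass
class IntentNode:
    """One node of a hypothetical semantic intent graph (design side)."""
    node_id: str
    role: str                      # what the element is for, not how it looks
    accessible_label: str
    required_states: set[str] = field(default_factory=set)


@dataclass
class BuiltComponent:
    """What a coding agent reports it actually implemented (also hypothetical)."""
    node_id: str
    accessible_label: str
    handled_states: set[str] = field(default_factory=set)


def find_drift(spec: IntentNode, built: BuiltComponent) -> list[str]:
    """Return human-readable mismatches between the spec and the build."""
    problems: list[str] = []
    if built.node_id != spec.node_id:
        problems.append(f"wrong node: expected {spec.node_id!r}, got {built.node_id!r}")
    if built.accessible_label != spec.accessible_label:
        problems.append(
            f"label: expected {spec.accessible_label!r}, got {built.accessible_label!r}"
        )
    # Any state the spec requires but the build does not handle counts as drift.
    for state in sorted(spec.required_states - built.handled_states):
        problems.append(f"missing state: {state}")
    return problems


checkout_button = IntentNode(
    node_id="checkout-cta",
    role="primary CTA for authenticated checkout",
    accessible_label="Proceed to checkout",
    required_states={"default", "error:cart-empty"},
)
built = BuiltComponent(
    node_id="checkout-cta",
    accessible_label="Proceed to checkout",
    handled_states={"default"},
)
print(find_drift(checkout_button, built))  # ['missing state: error:cart-empty']
```

The point of the sketch is that the empty-cart error state is written down explicitly, so its absence is something a machine can flag in seconds rather than something a stakeholder notices in a sprint review. It requires Python 3.9 or later for the `set[str]` annotations.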

The remaining gap: humans still write the requirements

Here is where I want to pump the brakes on the triumphalism, because I’ve seen this movie before. Every generation of abstraction – 4GLs in the 90s, model-driven engineering in the 2000s – promised to compress or eliminate the distance between business intent and running code. They all failed at the same point: the requirements themselves.

The AI agent handoff solves the translation problem between design and code. It does not solve the articulation problem between a human stakeholder’s actual need and the requirements they can express in language.

“When AI closes the design-to-code gap, we don’t eliminate the need for feedback loops – we compress them upstream, where they should have always been.”

The value of the sprint review was never really about the code. It was about showing a human something tangible so that they could recognize the gap between what they said and what they meant. AI agents don’t help with that. They may, however, make the tangible artifact available so much faster that the feedback loop becomes tighter, not obsolete.

What actually changes: a reframe of the sprint

In a world of high-fidelity AI agent pipelines, the Agile sprint doesn’t die – it migrates. The sprint review shifts from “did engineering build what design intended?” (a question AI agents answer well) to “did the team understand what the customer actually needed?” (a question only humans can answer).

1. Requirement sprints replace build sprints. The cadence moves to rapid prototyping of intent – getting a stakeholder to react to a working artifact in 48 hours rather than two weeks, because the build is now near-instant.

2. Product managers become prompt architects. The highest-leverage role shifts to the human who can translate stakeholder ambiguity into structured, unambiguous intent specifications that AI agents can execute with fidelity.

3. “Definition of Done” gets renegotiated. When an AI can write tests, code, and documentation from a spec, done is no longer about completeness – it’s about correctness of the original intent statement.

4. Teams shrink but the PM-to-engineer ratio inverts. Less engineering headcount needed per feature; more strategic product thinking needed per unit of AI output. The Dunbar limit on team size stops being a staffing constraint and becomes a coordination advantage.

5. Retrospectives focus on intent quality, not process. “Why did the agent produce the wrong behavior?” will trace back to a poorly specified intent – not an engineering failure. The retrospective becomes a requirements postmortem.

Is this the beginning of the end for Agile?

No. It’s the beginning of the end for the parts of Agile that exist only to compensate for human communication overhead. The stand-up that exists to sync engineers who are blocked by ambiguity? Obsolete if the agent resolves ambiguity from a spec. The sprint demo that exists to check if engineering misread the wireframe? Obsolete if an agent consumed the wireframe directly.

But the sprint review that exists to ask “is this what you actually wanted?” – that endures. Not because we choose to keep it. Because that question will never stop being hard to answer until you show someone something they can react to.

Agile was a workaround for a specific class of human failure: lossy communication across professional handoffs, compounded over time, caught too late. AI agents close some of those handoffs with a fidelity humans cannot match. What remains – the gap between what a customer says and what they mean – is not a communication problem. It’s a cognition problem. And no agent, however capable, can close a gap that lives entirely inside a human mind it has never modeled. The sprint lives on. Its purpose just got cleaner.